Time Shift

Cameron Allen

Time Shift is a song created in Ableton Live, an application for creating music, and is inspired by modern-day techno music. I created three programs in Max for Live (a coding platform for Ableton) that allowed me to manipulate the sound and MIDI notes of the song. The first program creates a harmony from the notes played. The second pitch-shifts the played notes, with the amount of shift controlled by the position of my mouse on the computer screen. The third modifies the amplitude of incoming sounds: each time the amplitude is modified, the sound is quickly replayed, producing an effect reminiscent of a woodpecker pecking. These programs let me create some unique sounds, such as those in the intro to the song.
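
The mouse-controlled pitch shift can be pictured with a small Python sketch. This is not the Max for Live patch itself. The linear mapping, the one-octave range, and the names `mouse_to_semitones` and `shift_note` are all assumptions made for illustration.

```python
def mouse_to_semitones(mouse_x, screen_width, max_shift=12):
    """Map a horizontal mouse position to a pitch shift in semitones.

    Assumption: the left edge gives -max_shift, the centre gives 0,
    and the right edge gives +max_shift (a simple linear mapping).
    """
    # Normalise the position to the range 0.0..1.0, clamping off-screen values.
    position = min(max(mouse_x / screen_width, 0.0), 1.0)
    return round((position * 2 - 1) * max_shift)


def shift_note(midi_note, semitones):
    """Transpose a MIDI note, keeping it inside the valid 0..127 range."""
    return min(max(midi_note + semitones, 0), 127)


# Middle C (MIDI note 60) with the mouse at the right edge of a 1920-pixel screen.
shifted = shift_note(60, mouse_to_semitones(1920, 1920))
```

With these assumed settings, moving the mouse across the full width of the screen sweeps a played note from one octave below to one octave above its original pitch.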

Dance to the Beat

Amanda Bermel

My work, Dance to the Beat, consists of both a software application and a song that I composed. The software allows users to play the song and watch different characters dance to it. Users can also add visual effects that overlay the video and follow the audio waveform. Using the four “read” message buttons, they can select a character, for instance the musical artist Childish Gambino, and watch that character dance. From the four drop-down menus, the user can select any of the symbols to modify the effects displayed in the video. One screen displays the image with the effects driven by the audio wave, and the other screen displays only the audio wave. In the upper left section, users can stop or play the song, stop or start the effects, and stop or play the video itself. I composed the song using Ableton and Max for Live, both music software. I created various Max patches and used them as instruments in my song. I then added Ableton effects and some pre-existing instruments. I also adjusted specific settings on each sound, for example pitch and volume.

Spacing Out

Rachel Bloom, Cassidy Carson, Garrett Web

Spacing Out is a work for fixed audiovisual media, in which 3D objects morph and change in response to our sounding work. We took an experimental approach as the project progressed. We found that trying new things with the tools given (that is, Max 8, a visual coding language for the arts, and Ableton, professional music software) was what allowed us to make a product we could be happy with. Garrett focused on the creation of our three main audio effect plugins using Max for Live, a music software technology that integrates the two. Each effect is unique. Cassidy created a portion of our piece using FM synthesis, a way of creating new sounds and timbres, as well as a virtual instrument and a small audio-reactive visual animation effect. Rachel created a larger-scale audio-reactive visual animation effect.

Auto Tune in Max

Cole Deters

Auto Tune in Max is a simple form of Auto Tune. Auto Tune is music software that processes any note played or sung into a microphone and converts it to the closest note of a musical scale of your choice. The main deliverable of Auto Tune in Max is a Max Audio Effect plug-in for Ableton Live. The work can be demonstrated in a live installation, but it would need a working microphone, laptop, and headphones. Auto Tune in Max matters because it gives people a taste of Auto Tune, which is gaining widespread usage in popular music.
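
The core idea of converting a note to the closest note of a chosen scale can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the Max plug-in: the input pitch is assumed to have been detected already, the scale is assumed to be a major scale, and the function names are hypothetical.

```python
import math

# Semitone offsets of a major scale from its root (an assumed example scale).
MAJOR_SCALE = (0, 2, 4, 5, 7, 9, 11)


def freq_to_midi(freq_hz):
    """Convert a frequency in Hz to a (fractional) MIDI note number, A4 = 440 Hz = 69."""
    return 69 + 12 * math.log2(freq_hz / 440.0)


def midi_to_freq(note):
    """Convert a MIDI note number back to a frequency in Hz."""
    return 440.0 * 2 ** ((note - 69) / 12)


def snap_to_scale(midi_note, root=0, scale=MAJOR_SCALE):
    """Return the scale note closest to midi_note (root is a pitch class, 0 = C)."""
    allowed = {(root + step) % 12 for step in scale}
    center = round(midi_note)
    # Every scale has a note within six semitones, so this window is wide enough.
    candidates = [n for n in range(center - 6, center + 7) if n % 12 in allowed]
    # On a tie, min() keeps the first candidate, which is the lower note.
    return min(candidates, key=lambda n: abs(n - midi_note))


def correct_pitch(freq_hz, root=0, scale=MAJOR_SCALE):
    """Snap a detected frequency to the nearest note of the scale, in Hz."""
    return midi_to_freq(snap_to_scale(freq_to_midi(freq_hz), root, scale))
```

For example, a slightly sharp A sung at 450 Hz would be pulled back to 440 Hz in C major, since A is the nearest note of that scale.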

Humanities Impact

Andy Gambini

Humanities Impact, an open work coded and written by Andy Gambini, is meant to inspire the player to look at how Earth is changing and, hopefully, to act afterwards. The player treats the provided time-lapse footage as sheet music, interpreting the loss of trees in the Amazon or ice in the Arctic. After playing, I hope the musician realizes the scale at which humans have altered the Earth. The piece starts in the key of C and modulates over its course to F# major pentatonic (all black keys), symbolizing ice melting because of increased CO2 emissions. The player performs on a MIDI keyboard with a coded string sound, altering the amplitude with the mouse.
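
The remark that F# major pentatonic uses only black keys can be checked with a short Python sketch. The constant and function names are illustrative and are not part of the piece's code.

```python
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

# Pitch classes (0 = C) that fall on the black keys of a piano.
BLACK_KEYS = {1, 3, 6, 8, 10}

# Semitone offsets of a major pentatonic scale from its root.
MAJOR_PENTATONIC = (0, 2, 4, 7, 9)


def pentatonic_pitch_classes(root):
    """Return the pitch classes of the major pentatonic scale built on root."""
    return [(root + step) % 12 for step in MAJOR_PENTATONIC]


f_sharp = NOTE_NAMES.index("F#")
scale = pentatonic_pitch_classes(f_sharp)
names = [NOTE_NAMES[pc] for pc in scale]      # F#, G#, A#, C#, D#
uses_only_black_keys = set(scale) == BLACK_KEYS  # True
```

The same function shows why the piece has to modulate: the pentatonic scale built on C contains only white keys.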

Sargeray

Muzahir Hussain

Sargeray is a three-minute Balochi song in which I recorded MIDI notes over an audio file. The audio file is a recording of my friend Mahgunag, who lives in Baluchistan, Pakistan, singing. The MIDI notes imitate two Balochi musical instruments: the Nall (an end-blown flute) and the Dambura (a string instrument played like a guitar). Because Balochi musical instruments are not available in the Kontakt factory library, a collection of instrumental audio samples, I searched it for instruments that sound similar to them.

SenStation app

Sisi Kang

The SenStation app applies the concept of open work so that users can interact through different senses to make music and alter sounds. Its functions include recording the user’s own sound, playing a preloaded set of beats, and using a camera so that users can manipulate synth sounds through motion. Users can save presets and alter the pitch and speed of certain sounds to their preference, which is what makes this an open work. New technologies, especially digital music and sound manipulation, enable innovation that allows various forms and levels of creativity. The SenStation app aims to get users to engage with, make, and enjoy sound and music.

ThReemix

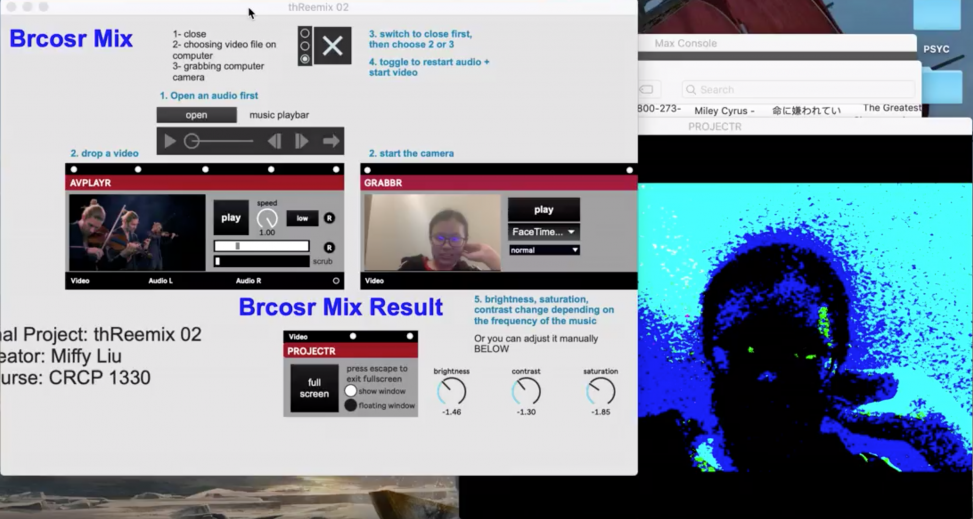

Miffy Liu

ThReemix is a project that allows users to apply audio-responsive filters that alter the overall appearance of a video according to frequency changes in an audio input. The video input can be either a preexisting video in mp4 format or a real-time camera capture on the computer. The audio input can be any audio in mp3 format. The output video can be viewed either in a pop-up window or on a screen inside the project, and the user can customize the dimensions of both. I split my project into two stand-alone applications, thReemix 01 and 02. ThReemix 01 has two mixes: signal mix, which filters a video with spectrums that pump in the pattern of the audio input’s frequency, and 3D signal mix, which displaces the skin of a 3D object with the video and bumps the object in the pattern of the frequency as well. ThReemix 02 has one mix: brcosr mix, which alters the brightness, saturation, and contrast based on the frequency change in the audio input.

Sound Interaction

Yuhang Liu

Sound Interaction is a project that realizes the interaction between sounds and 3D models, images, and videos. First, users click the “Start/Stop” button to start or stop the project. By clicking “read image” and “read 3DTexture”, users can load any two images from their computer, which can be the same or different. Next, by clicking “read video”, users can load any video they want. In addition, users can choose a sample shape by clicking the button for that shape. By clicking “texture VT” and “texture IMG”, users can add the selected images or videos as a texture to the sample shape. Users can also load a 3D model by clicking “load3DModel”, and by clicking “texture VT3D” and “texture 3DModel”, they can texture the model with the selected video or image in the same way. The “read audio” button adds a sound. Next, when users click “open sound” and “play sound”, they will see the sample shape change its mode according to the sound playing. Users can also click the button on the right side to change the modes. At the same time, the speed and angle of the rotation can be changed with the mouse.

Amid

Diana Vu

The inspiration for Amid was the current situation we are in. Being quarantined at home with nowhere to go brings up a lot of emotions that everyone experiences differently. Whether they stem from anxiety, joy, boredom, doom, or something else, the purpose of this project was to create an outlet for these emotions. Since “online” has become the new norm, this project can be distributed to anyone who wishes to lash out on their laptop. Literally.

Amid turns your laptop into a musical instrument. Pressing the keys listed in the instructions produces a new sound, and moving the mouse horizontally changes the volume.

Another feature of Amid is the visuals. Once the user toggles the visuals on, they can keep them in a small preview window or hit ‘esc’ to make them full screen. The visuals change shape and color every time the user plays a new key.
